History of Containerization

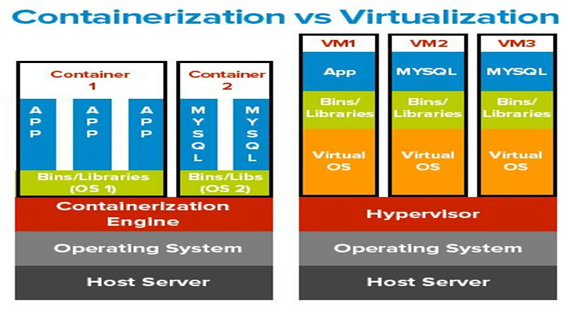

To understand how containerization began, let’s go back to the mid-90s, when a dedicated server was typically used to run a single application. Running 10 applications meant purchasing 10 separate servers to host them. Virtualization came into existence to overcome this problem. Virtualization is a technique for running a guest operating system on top of a host operating system.

At first, this technique revolutionized IT, because it let developers run multiple operating systems on a single host machine. But it has its shortcomings as well. Although we can run several virtual machines on a single server, each one runs a full guest OS and consumes a significant share of the host’s CPU, RAM, and disk, which degrades overall system performance. This is where the concept of “Containerization” emerged.

Understanding the concept of Containerization

The term “Containerization” in Information Technology means running an application or a service in an isolated environment, so that it does not affect applications running in other containers. Containerization is itself a type of virtualization, but unlike a virtual machine, a container does not need a new operating system each time one is created. Instead, every container uses the host machine’s OS as its base, so no additional OS is required when a new container is launched. Containers are lightweight and start faster than virtual machines, often in a fraction of a second, because they hold only the application-specific binaries and related libraries.
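One way to see that a container reuses the host's OS rather than booting its own is to compare the kernel release reported on the host with the one reported inside a container. The sketch below is only an illustration under assumptions: it presumes the `docker` CLI is installed on a Linux host and that the `alpine` image can be pulled, and the helper names are hypothetical. On Docker Desktop for macOS or Windows, containers run inside a Linux VM, so the two values would differ.

```python
import platform
import shutil
import subprocess
from typing import List, Optional


def kernel_check_command(image: str = "alpine") -> List[str]:
    """Build a `docker run` command that prints the kernel release seen inside a container."""
    # --rm removes the container as soon as the command exits.
    return ["docker", "run", "--rm", image, "uname", "-r"]


def container_kernel_release(image: str = "alpine") -> Optional[str]:
    """Return the kernel release reported inside a container, or None if docker is not installed."""
    if shutil.which("docker") is None:
        return None
    result = subprocess.run(
        kernel_check_command(image), capture_output=True, text=True, check=True
    )
    return result.stdout.strip()


def host_kernel_release() -> str:
    """Return the kernel release of the machine running this script."""
    return platform.release()
```

Calling `container_kernel_release()` and `host_kernel_release()` on a Linux host with Docker running should return the same string, since the container shares the host's kernel.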

Advantages of Containerization over Virtualization

Following are some of the advantages of Containerization over Virtualization:

- Containers are fast and boot quickly compared to VMs, because they use the host OS and share the relevant libraries.

- Containers use host resources more efficiently than virtual machines, because they do not each reserve resources for a full guest OS.

- Each container carries its own software packages, libraries, and binaries needed to run its application.

- A containerization engine is responsible for managing the containers.

The diagram below illustrates this more clearly. After that, we will discuss the most popular containerization tool, Docker.

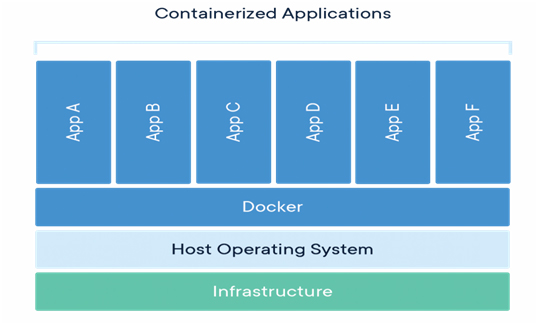

What is Docker?

Docker is an open-source containerization tool that lets us run multiple applications in isolated environments called Docker containers. Once we have a Docker container, we can move it from Dev and QA environments to Production, and everywhere we get the same isolated container that we created and tested. The diagram below shows how different applications can run on a single host at the same time.

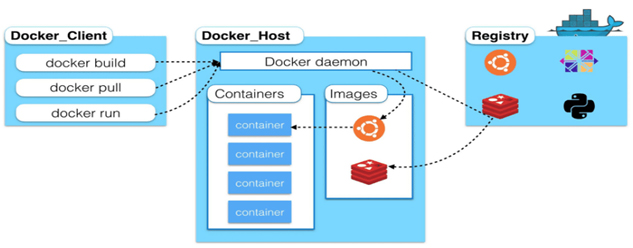

Understanding the Docker Architecture

Following are the components that make up the Docker architecture. Let me describe them one by one:

- Docker Client: Using the client, we write commands to interact with the Docker daemon (the service responsible for running the Docker engine). All Docker activities can be managed from here, i.e., the command-line interface.

- Docker Host/Machine: The main machine where the Docker daemon runs. It holds all the Docker containers and Docker images that we have created or pulled from the internet.

- Docker Hub/Repo/Registry: A global repository for accessing the Docker images that are publicly available on the internet. We can pull these images, modify them into new images to suit our needs, and push the results back to the repository for future use. You can also refer to the diagram for a better understanding.

Note: Docker is not the only containerization platform available in the market, but it is the most popular one among IT organizations.
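The interaction between these three components can be sketched as a sequence of client commands: pull an image from the registry, run it as a container on the host, save the modified container as a new image, and push that image back. This is only an illustration under assumptions: the repository name `example-user/custom-nginx` and the container name `web-demo` are hypothetical, and pushing requires an account with access to that repository.

```python
from typing import List


def registry_workflow(base_image: str, container: str, new_image: str) -> List[List[str]]:
    """Return the docker CLI commands for a pull -> run -> commit -> push cycle."""
    return [
        # The client asks the daemon to download the image from the registry.
        ["docker", "pull", base_image],
        # The daemon starts a container from that image in the background (-d).
        ["docker", "run", "-d", "--name", container, base_image],
        # Save the container's current state as a new image.
        ["docker", "commit", container, new_image],
        # Upload the new image to the registry for future use.
        ["docker", "push", new_image],
    ]


if __name__ == "__main__":
    # Hypothetical names, printed rather than executed.
    for command in registry_workflow("nginx", "web-demo", "example-user/custom-nginx"):
        print(" ".join(command))
```

The sketch only prints the commands so it is safe to run anywhere. In practice, new images are more often built from a Dockerfile with `docker build` than captured with `docker commit`; `commit` is used here because it mirrors the "modify and save" step described above.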

So, why do we need Docker?

The points below help answer this question:

- It speeds up the development process.

- It offers handy application encapsulation.

- A container behaves the same on a local machine and on QA, Dev, and Prod servers.

- Containers are very easy to start, stop, and restart.

- If a container needs to run on thousands of servers, it can be rolled out quickly, which makes it easy to scale.
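As a rough illustration of how simple the start, stop, and restart operations are, the sketch below builds the corresponding `docker` CLI commands for a named container. The helper name and the container name `web-demo` are hypothetical, and the commands are printed rather than executed.

```python
from typing import List

# Lifecycle subcommands of the docker CLI covered by this sketch.
LIFECYCLE_ACTIONS = ("start", "stop", "restart")


def lifecycle_command(action: str, container: str) -> List[str]:
    """Build the docker CLI command for a lifecycle action on a named container."""
    if action not in LIFECYCLE_ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    return ["docker", action, container]


if __name__ == "__main__":
    for action in LIFECYCLE_ACTIONS:
        print(" ".join(lifecycle_command(action, "web-demo")))
```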

Docker Container vs Virtual Machine

The table below lists some of the main differences between Docker containers and VMs:

| Virtual Machine | Docker Container |
|---|---|
| Less efficient | More efficient |
| Each VM runs its own OS | Containers share the host OS |
| Hardware/software virtualization | OS-level virtualization |
| OS-level isolation | Process-level isolation |

Use Cases of Docker Containers

The table below lists popular use cases where Docker containers can be very useful:

| Use Case | Docker |
|---|---|
| Infrastructure | Yes |
| Container Environment Hosts | Yes |
| Databases | Yes |
| Mobile Apps | Yes |
| Microservices | Yes |
| Web Applications | Yes |

Summary

In this article, we have learned how the need for containerization arose and how containers solve the problem of needing a separate host per application by running many applications on a single host. So now the question is whether containerization is better than virtualization. The answer is a big YES. The points below make it clearer why I recommend containers over VMs:

- Containers have faster startup times. A containerized application usually starts in a couple of seconds, whereas a virtual machine can take a couple of minutes.

- Containers provide better resource distribution. If a resource is free and not being used by one container, it can easily be used by another.

- Containers access hardware through the host kernel, without the hypervisor layer that virtual machines go through.

- Containers don’t require an additional OS to work; they use the OS already installed on the host machine.

Contact us to learn more about how Serigor can help you with Docker containerization and how it can benefit your business and customers.